Algorithms in the AI era

How to deal with software development risks according to the EU AI Act?

In 2019, a Dutch blog post titled Three Codes Of Conduct in AI was published. It looked at three different codes of conduct that existed for AI at the time. Four years later, some of these guidelines are about to become legislation, so it is time for an update.

The AI Act

In June 2018, the European Commission published a first version of its guidelines, which it finalised in April 2019. These guidelines have since been turned into proposed rules, known as the "EU AI Act", which would be the world's first comprehensive AI law. The first version of these rules was proposed in April 2021, and they are now awaiting approval by the European Parliament.

These laws will apply to all users and creators of AI systems in the EU, as well as AI systems made outside the EU but used within the EU. There are some exceptions for military systems and law enforcement.

The purpose of these laws is to ensure that AI systems in the EU are safe, transparent, traceable, non-discriminatory, and environmentally friendly. In addition, AI systems must always be overseen by humans, and the Act will contain a clear definition of AI so that it is clear when the rules apply. All of these points were also mentioned in the 2019 blog post and in the 2019 version of the guidelines.

The final definition of an AI system is not clear at this time, but it is likely to cover any system that uses machine learning, logic- and knowledge-based approaches, or statistical approaches.

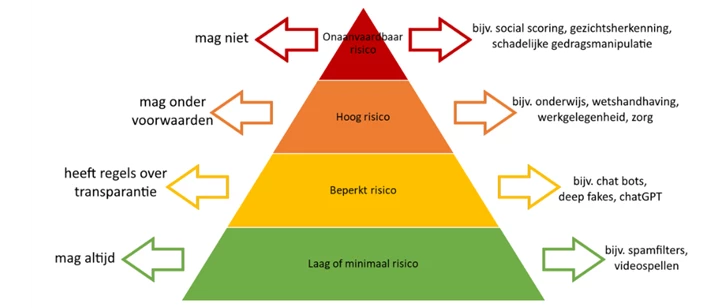

An important addition since the 2019 version is the introduction of risk levels: the latest version applies different rules depending on where an algorithm is used and for what purpose. The more risk a system poses, the stricter the rules it must adhere to.

Unacceptable risk

Algorithms that perform harmful cognitive behavioural manipulation, or that exploit specific vulnerable groups, are not allowed. Nor may an algorithm be used for 'social scoring', where people are given a score based on their behaviour, status, or personal characteristics. Real-time and remote biometric identification, which includes facial recognition, is also banned. For facial recognition there are several exceptions, which may vary from one member state to another.

High risk

These algorithms may be used, but only under many conditions. The high-risk level covers two categories:

- AI systems for products already covered by EU safety legislation such as toys, cars, medical devices, etc.

- AI systems in the following eight subcategories:

  - Biometric identification and categorisation of natural persons

  - Management and operation of critical infrastructure

  - Education and vocational training

  - Employment, worker management, and access to self-employment

  - Access to and enjoyment of essential private services and public services and benefits

  - Law enforcement

  - Migration, asylum, and border control management

  - Assistance in legal interpretation and application of the law

All high-risk systems must be registered in an EU database before they are put into operation. If a system is already covered by safety legislation, it must comply with that existing legislation. If there is no legislation yet, the provider must assess the system itself to check that it complies with the new requirements, after which it may carry the CE mark. Only systems that use biometric information must be assessed by an approved body.

High-risk systems are also subject to rules on data, documentation and traceability, transparency, human supervision, accuracy, and robustness.
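For development teams, the steps described above can be tracked as a simple checklist. The following is a minimal sketch, not a legal tool: the class `HighRiskSystem`, its fields, and the function `open_actions` are hypothetical names chosen for illustration, and the sketch only covers systems that are not already covered by existing safety legislation.

```python
from dataclasses import dataclass


@dataclass
class HighRiskSystem:
    """Hypothetical record of where a high-risk system stands."""
    name: str
    uses_biometrics: bool = False
    registered_in_eu_database: bool = False
    self_assessment_done: bool = False
    approved_body_assessment_done: bool = False


def open_actions(system: HighRiskSystem) -> list[str]:
    """List the steps from the text that are still outstanding."""
    actions = []
    if not system.registered_in_eu_database:
        actions.append("register in EU database")
    if system.uses_biometrics:
        # Biometric systems need an assessment by an approved body.
        if not system.approved_body_assessment_done:
            actions.append("assessment by approved body")
    elif not system.self_assessment_done:
        # All other systems in this sketch assess themselves.
        actions.append("self-assessment")
    return actions


def ready_for_operation(system: HighRiskSystem) -> bool:
    """True once no outstanding steps remain in this simplified model."""
    return not open_actions(system)


cv_screener = HighRiskSystem("cv-screener", registered_in_eu_database=True)
print(open_actions(cv_screener))  # ['self-assessment']
```

The additional rules on data, documentation, human supervision, and so on are deliberately left out; they would need their own, more detailed checks.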

Limited risk

This level covers systems that interact with humans (such as chatbots), systems with emotion recognition, biometric categorisation systems, and AI systems that generate or manipulate photos, videos, or audio, such as deep fakes.

These systems are subject to a limited set of transparency rules, which allow users to make their own judgements about using them. Users only need to be informed that they are dealing with an AI.

Generative AI systems like ChatGPT are mentioned separately. These systems must also make it clear that their content is generated by AI. In addition, the rules explicitly state that such a system must be designed so that it does not generate illegal content, and that a summary of the copyrighted data used for training must be provided.
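In code, the transparency requirement for chatbots and generated content could look roughly like the sketch below. Everything here is an assumption made for illustration: the disclosure wording, the `ChatSession` class, and the `ai_generated` field are not prescribed by the AI Act.

```python
from dataclasses import dataclass

# Hypothetical wording; the Act does not prescribe an exact text.
AI_DISCLOSURE = "You are chatting with an AI system."


@dataclass
class ChatSession:
    """Tracks whether the user has already been told they talk to an AI."""
    disclosed: bool = False

    def reply(self, generated_text: str) -> str:
        """Prefix the first reply of a session with the AI disclosure."""
        if self.disclosed:
            return generated_text
        self.disclosed = True
        return f"{AI_DISCLOSURE}\n\n{generated_text}"


def label_generated(content: str) -> dict[str, object]:
    """Attach a machine-readable marker to AI-generated content."""
    return {"content": content, "ai_generated": True}


session = ChatSession()
print(session.reply("Hello, how can I help?"))  # includes the disclosure
print(session.reply("Sure, here you go."))      # plain reply
```

Keeping the disclosure in one place makes it easier to adjust the wording once the final text of the regulation is known.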

Low or minimal risk

Systems that do not fall into any of the above categories are not subject to any rules. However, they are encouraged to adhere to the codes of conduct.
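To make the four risk levels concrete, here is a minimal sketch of how a team might triage its own systems. The tag names, the `AISystem` class, and the `classify` function are hypothetical and only loosely follow the categories listed in this post; a real assessment depends on the final legal text and cannot be reduced to a lookup.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskLevel(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical tags, loosely based on the categories described in this post.
PROHIBITED_PRACTICES = {
    "behavioural_manipulation",
    "exploiting_vulnerable_groups",
    "social_scoring",
    "realtime_remote_biometric_identification",
}
HIGH_RISK_AREAS = {
    "biometric_identification",
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration_border_control",
    "legal_interpretation",
}
TRANSPARENCY_TRIGGERS = {
    "chatbot",
    "emotion_recognition",
    "biometric_categorisation",
    "generated_media",
}


@dataclass
class AISystem:
    name: str
    tags: set[str] = field(default_factory=set)
    safety_regulated_product: bool = False  # e.g. toys, cars, medical devices


def classify(system: AISystem) -> RiskLevel:
    """Return the strictest level whose criteria the system matches."""
    if system.tags & PROHIBITED_PRACTICES:
        return RiskLevel.UNACCEPTABLE
    if system.safety_regulated_product or system.tags & HIGH_RISK_AREAS:
        return RiskLevel.HIGH
    if system.tags & TRANSPARENCY_TRIGGERS:
        return RiskLevel.LIMITED
    return RiskLevel.MINIMAL


print(classify(AISystem("cv-screener", {"employment"})))  # RiskLevel.HIGH
print(classify(AISystem("spam-filter")))                  # RiskLevel.MINIMAL
```

The checks run from strictest to least strict, so a system that matches several levels always ends up in the strictest one.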

What’s next?

The European Parliament hopes to have a final version of the AI Act by the end of 2023 so that it can be put into use. It already recommends taking this regulation into account when building AI systems, as a large part of these rules will eventually become legislation.

Links for more information

Artificial intelligence act

EU AI Act: first regulation on artificial intelligence
