Artificial Intelligence

An overview of the professional field in 2018.

On hearing the term 'Artificial Intelligence' (AI), most people picture intelligent robots. Well-known examples are, of course, the Terminator movies, but also films like A.I. and Ex Machina.

Of course, if these kinds of robots (with human characteristics) did exist, they would fall under the heading of AI. However, the field of AI is much larger than just these robots.

In this blog post, I will explore the field of AI in more detail. I will briefly discuss the different sub-areas of AI and the most frequently mentioned techniques and methods. This is followed by several examples of how AI is already used in practice in industry. Lastly, I will deal with the possible dangers of a real AI application.

What is Artificial Intelligence?

Put simply, AI is intelligence displayed by machines. Your follow-up question might then be 'What is intelligence?', and this is where much confusion arises. One contributing factor is the AI effect.

The AI effect is the tendency that, once a computer turns out to be able to perform a task that people thought required intelligence, that task is relabelled as not really requiring intelligence after all.

One example is chess: for centuries, good chess players were regarded as highly intelligent, but as soon as Deep Blue defeated the world chess champion Garry Kasparov in 1997, people said: 'Ah yes, but the computer does it with brute processing power; that's not really intelligent.' In short, chess was re-evaluated as a task that can be done either by intelligence or by brute processing power, and was therefore regarded as requiring less intelligence.

Subsequently, it was often said in Asia that chess requires less intelligence than the board game Go. When the program AlphaGo also beat Go masters in 2016, board games as a whole were re-evaluated relative to general intelligence. The reason seems to be that computers are very good at certain tasks while being unable, or barely able, to do other things that people do every day.

Douglas Hofstadter, an American professor of cognitive science, summarised this effect in what he called Tesler's Theorem: 'AI is whatever hasn't been done yet'.

Here are some examples of activities that are very difficult for most people, but are easily performed by certain computers:

- Beating grandmasters in board games like chess and Go

- Mathematics and complex calculations

- Performing massive numbers of calculations simultaneously

- Searching in gigantic amounts of data

- Accurately controlling and adjusting a process (for example keeping a drone in its place)

And here are some tasks that are common and simple for humans, but still almost impossible for computers to do:

- Seeing in 3D and recognising what is seen

- Understanding spoken and written language

- Expressing a sense of humour

- Reasoning and solving problems

- Performing creative processes (arts/ideas/new solutions)

- Taking part in social interaction

This makes it difficult for many people to estimate what the computer (AI) is or is not good at. By way of illustration, here is a blog post that clearly shows what happens (in our heads, of course) when we look at a funny picture of Barack Obama.

Strong and weak AI

Strong AI is defined as a computer system that can successfully perform any intellectual task that humans can perform. In short, it is a general intelligence that can be applied to all kinds of areas, for example analysing a company's problem and finding a suitable solution for it.

Weak AI is a system that shows intelligence in a very limited area; outside that area, the application shows no intelligence at all. An example is the AlphaGo algorithm, which plays Go very well but cannot hold a simple conversation.

The progress of AI in recent decades has mainly taken place in the area of weak AI. Most AI researchers agree that achieving strong AI requires more than just scaling up weak AI methods or simply combining them.

Breakdown of AI areas

In the AI world, we distinguish several research domains. These are areas (problems) in which AI can still make progress:

- Reason, logic, and problem solving

- Representing knowledge

- Planning

- Learning

- Processing natural language

- Observation

- Movement

- Social intelligence

Here is a brief explanation of each of these areas.

Reason, logic, and problem solving

This branch of AI is about formal logic and how a computer can perform logical reasoning. The field studies the various logics and their strengths and weaknesses. Along the way, it has produced insights into how to reason with uncertain and incomplete knowledge. But with formal and traditional logics, we quickly run into a problem: the number of possible reasoning steps is enormous.

Suppose you have a certain problem (an impasse) and want to solve it with logic. You can apply many logical rules to many parts of the problem, which leads to a very large number of possible chains of logical steps. You will need other techniques (deep neural networks, for example, but more about that later) to decide which part of the problem should be tackled with which logical rule.
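To make 'applying logical rules' concrete, here is a minimal sketch of forward chaining over propositional if-then rules (Horn clauses), one standard inference technique; the article itself does not name a specific one. The rules and fact names are hypothetical. Every rule whose premises are all known fires, until nothing new can be derived. With thousands of rules and facts, the number of possible derivations grows quickly, which is the search problem described above.

```python
def forward_chain(facts, rules):
    """Derive every fact entailed by propositional Horn rules.

    facts: iterable of known atoms (strings)
    rules: list of (premises, conclusion) pairs
    Returns the set of known facts and the order in which new facts were derived.
    """
    known = set(facts)
    derived = []
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if conclusion not in known and all(p in known for p in premises):
                known.add(conclusion)
                derived.append(conclusion)
                changed = True
    return known, derived


# Hypothetical rules, for illustration only.
rules = [
    (["rain"], "wet_road"),
    (["wet_road", "high_speed"], "skid_risk"),
    (["skid_risk"], "slow_down"),
]
known, derived = forward_chain(["rain", "high_speed"], rules)
print(derived)  # ['wet_road', 'skid_risk', 'slow_down']
```

Note that the procedure tries every rule blindly on every pass; deciding which rules are worth trying first is exactly where the other techniques mentioned above come in.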

Representing knowledge

How can we represent human knowledge so that it can be used for automatic reasoning and searching? Knowledge must be structured in a particular way, for example in an ontology language. At present, it is much easier for people to see knowledge in context than it is for computers.

We understand the statement 'as free as a bird' because we easily associate the idea that most birds can fly with a feeling of freedom. Logically speaking, there's nothing wrong with being 'as free as a penguin', but we know that their inability to fly makes penguins less free than the average bird. Through our general knowledge, reasoning, and empathy, this is intuitively obvious, but a computer stumbles over it. Reasoning strictly, it might conclude: a penguin is a bird, so 'as free as a penguin' implies 'as free as a bird'.
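One classic way to structure this kind of knowledge is an is-a hierarchy with default properties and exceptions. The minimal sketch below (concept names and properties are hypothetical) stores 'can fly' as a default on `bird` and lets `penguin` override it. Without that explicit exception, a lookup that follows the is-a chain would conclude that penguins fly, which is the kind of stumble described above; the link between flying and freedom would also have to be made explicit.

```python
# A tiny is-a hierarchy: each concept names its parent and its own properties.
# All concepts and properties here are hypothetical examples.
KB = {
    "animal": {"parent": None, "props": {"alive": True}},
    "bird": {"parent": "animal", "props": {"can_fly": True}},      # default
    "penguin": {"parent": "bird", "props": {"can_fly": False}},    # exception
    "sparrow": {"parent": "bird", "props": {}},
}


def is_a(concept, ancestor):
    """True if `concept` equals `ancestor` or inherits from it."""
    while concept is not None:
        if concept == ancestor:
            return True
        concept = KB[concept]["parent"]
    return False


def lookup(concept, prop):
    """Return the most specific value of `prop`, walking up the is-a chain."""
    while concept is not None:
        node = KB[concept]
        if prop in node["props"]:
            return node["props"][prop]
        concept = node["parent"]
    return None  # property unknown


print(is_a("penguin", "bird"))       # True
print(lookup("sparrow", "can_fly"))  # True  (inherited default)
print(lookup("penguin", "can_fly"))  # False (local exception wins)
```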

Planning

Planning means determining and prioritising the goals to be pursued and the actions needed to reach them. Difficulties arise when other agents (other computers or people) are also acting in the same domain. Another agent may disrupt previously made plans, so that a plan, or part of it, has to be revised. Especially for very complex tasks involving many players, communication and a shared understanding of the domain and its possibilities are needed to arrive at a good plan.
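A minimal sketch of classical planning, assuming a STRIPS-like representation: a state is a set of facts, and each action has preconditions, facts it adds and facts it deletes. A breadth-first search finds the shortest sequence of actions that makes the goal facts true. The door-and-key domain is hypothetical. When another agent changes the world, the simplest response is to replan from the new state, as in the last line.

```python
from collections import deque

# Hypothetical actions: name -> (preconditions, add effects, delete effects)
ACTIONS = {
    "walk_to_door": ({"in_hall"}, {"at_door"}, {"in_hall"}),
    "pick_up_key": ({"at_door", "key_on_floor"}, {"has_key"}, {"key_on_floor"}),
    "unlock_door": ({"at_door", "has_key"}, {"door_open"}, set()),
}


def plan(start, goal):
    """Breadth-first search for the shortest action sequence reaching `goal`."""
    start = frozenset(start)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, steps = queue.popleft()
        if goal <= state:  # all goal facts hold in this state
            return steps
        for name, (pre, add, delete) in ACTIONS.items():
            if pre <= state:  # action is applicable
                nxt = frozenset((state - delete) | add)
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, steps + [name]))
    return None  # no plan exists


print(plan({"in_hall", "key_on_floor"}, {"door_open"}))
# ['walk_to_door', 'pick_up_key', 'unlock_door']

# Replanning after the world changed (e.g. someone moved us to the door):
print(plan({"at_door", "key_on_floor"}, {"door_open"}))
# ['pick_up_key', 'unlock_door']
```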

Learning

In learning, we distinguish three main forms.

Supervised learning

Here, the algorithm learns from large amounts of training data in which every training item is labelled with the correct answer. For example, we give the algorithm thousands of images with and without traffic signs and indicate for each image whether it contains a traffic sign. The algorithm then learns to recognise traffic signs in a picture. This form of learning is the most common in today's weak AI systems and services.
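A minimal sketch of supervised learning, using a k-nearest-neighbours classifier (a simple, standard method chosen for illustration; the article does not prescribe one). Each training item comes with its label, and a new item gets the majority label of the most similar training items. The two numeric features and all data points are made up; a real traffic-sign recogniser would learn from image pixels, typically with a neural network.

```python
import math
from collections import Counter

# Hypothetical labelled training data: (features, label).
# The features could be, say, (redness, roundness) scores extracted from an image.
TRAIN = [
    ((0.9, 0.8), "sign"), ((0.8, 0.9), "sign"), ((0.85, 0.7), "sign"),
    ((0.1, 0.2), "no_sign"), ((0.2, 0.1), "no_sign"), ((0.3, 0.25), "no_sign"),
]


def predict(x, train=TRAIN, k=3):
    """Majority vote among the k training items closest to x."""
    nearest = sorted(train, key=lambda item: math.dist(x, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


print(predict((0.75, 0.8)))  # sign
print(predict((0.15, 0.3)))  # no_sign
```

`math.dist` requires Python 3.8 or later.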

Unsupervised learning

The algorithm must find connections and recognise patterns in the input data by itself. No examples of what is to be learned are given; the algorithm discovers patterns and relationships between properties in the data on its own.
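A minimal sketch of unsupervised learning with k-means clustering (Lloyd's algorithm), one common method, shown here on made-up one-dimensional data. No labels are given: the algorithm only receives numbers and discovers by itself that they form two groups.

```python
import random


def kmeans(points, k, iterations=20, seed=0):
    """Group 1-D points into k clusters without any labels; returns sorted centroids."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k randomly chosen data points
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its old centroid).
        centroids = [sum(c) / len(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return sorted(centroids)


data = [1.0, 1.2, 0.8, 1.1, 9.0, 9.5, 8.7, 9.2]  # made-up, unlabelled values
print([round(c, 3) for c in kmeans(data, k=2)])  # [1.025, 9.1]
```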

Reward-driven (reinforcement) learning

Here, the algorithm has to learn by itself how to behave in order to maximise a certain reward function. For a board game, the reward might be winning the game. By playing against itself or against other algorithms, the algorithm adjusts itself so that its chance of winning improves. It is therefore not told literally what to learn, but it is given a reward function to optimise.
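A minimal sketch of reward-driven learning using tabular Q-learning, one standard reinforcement learning algorithm, on a hypothetical five-cell corridor. The agent is never told to walk right; it only receives a reward of 1 when it reaches the right-hand end, and learns an estimated value for each (state, action) pair. The learning rate `alpha`, discount `gamma` and exploration rate `epsilon` are illustrative choices.

```python
import random

N_STATES = 5        # corridor cells 0..4; cell 4 is the goal and ends an episode
ACTIONS = (-1, +1)  # step left, step right


def choose(q, s, rng, epsilon):
    """Epsilon-greedy: usually the best-known action, sometimes a random one."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    best = max(q[(s, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(s, a)] == best])  # random tie-break


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    goal = N_STATES - 1
    for _ in range(episodes):
        s = 0
        while s != goal:
            a = choose(q, s, rng, epsilon)
            s_next = min(max(s + a, 0), goal)        # walls at both ends
            reward = 1.0 if s_next == goal else 0.0  # the only feedback the agent gets
            future = 0.0 if s_next == goal else max(q[(s_next, b)] for b in ACTIONS)
            # Move Q(s, a) towards reward + discounted value of the next state.
            q[(s, a)] += alpha * (reward + gamma * future - q[(s, a)])
            s = s_next
    return q


q = q_learning()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)  # [1, 1, 1, 1]: the agent has learned to step right everywhere
```

Game-playing systems use the same idea with far larger state spaces, where a neural network replaces the lookup table `q`.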

Processing natural language

This involves understanding sentences and being able to translate texts automatically. The consensus is that strong AI is needed to process language at the level of a human. However, recurrent neural networks are making considerable progress on this problem.

Observation

This includes speech recognition: converting spoken language into text. It also covers object recognition: reconstructing a 3D scene or object from sensory input, recognising the objects, what is happening to them and how they are moving, and from that predicting how the environment will change.

Movement

This concerns controlling artificial muscles (motors) or other actuators to create fluid and efficient movement. Sensors play a part in this: they measure the precise position and attitude (orientation) of the entity being moved. Movement also requires the ability to predict motion in the surroundings and to plan one's own movement, or to correct it.
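Correcting a movement based on sensor readings is typically done with a feedback loop. The sketch below is a proportional controller for a hypothetical one-dimensional 'drone' that must move to and hold a target position; it assumes a perfect position sensor and an actuator that responds instantly, and the gain is an illustrative value. Real systems usually add integral and derivative terms (PID control) and must cope with noisy sensors and inertia.

```python
def hold_position(target, position, gain=0.5, steps=20):
    """Repeatedly measure the error and apply a correction proportional to it."""
    history = [position]
    for _ in range(steps):
        error = target - position   # sensor reading compared with the desired position
        position += gain * error    # actuator applies a proportional correction
        history.append(position)
    return history


trace = hold_position(target=10.0, position=0.0)
print(trace[:4])            # [0.0, 5.0, 7.5, 8.75]: the error halves every step
print(round(trace[-1], 3))  # 10.0
```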

Social intelligence

This is about what it takes to be able to communicate credibly with people and to be able to predict in some way what the next step in the social interaction will be. A crucial part of this is being able to reason about human emotions and motives so that the AI application can better determine what effect certain behaviour or statements have on people.

Methods and techniques

Let’s go on to discuss the following important techniques from the professional field of AI:

- Classification and statistical methodologies

- Search and optimisation

- Logic

- Methods based on probability

Classification and statistical methodologies

These methods are designed to learn certain correlations from data. In the case of classification, we are mainly talking about supervised learning, as discussed earlier. Many techniques are available to support this way of learning; neural networks are the most common.

Neural networks

Neural networks are loosely modelled on the brain. They are networks of interconnected cells. We have input cells into which we feed the input, and output cells (or a single output cell) from which we read the output.

For a given input pattern, some cells will respond and others will not. The connections between cells have weights (often between -1 and 1), and each cell has a threshold value: if the weighted sum of all incoming signals exceeds that threshold, the cell responds.

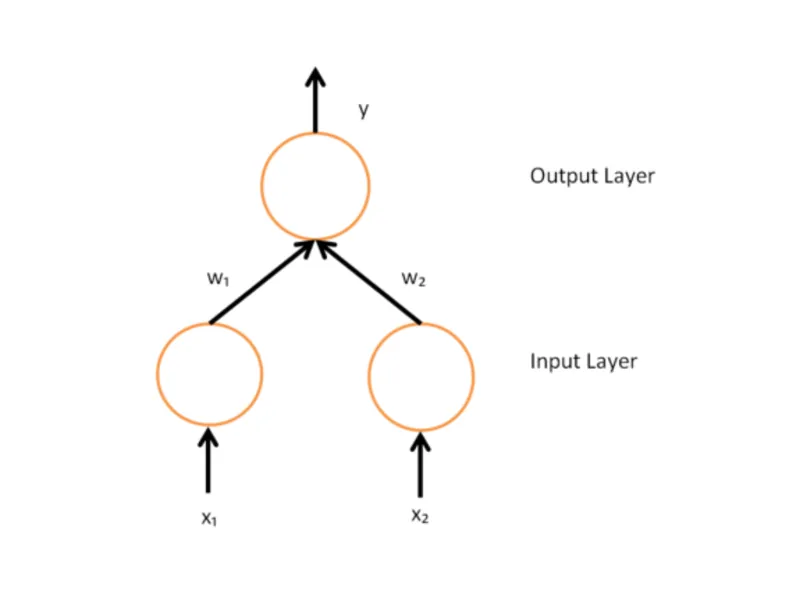

Here is a simple neural network of 2 input cells and only 1 output cell.

Suppose cells X1 and Y are connected, and the weight of this connection is called w1. If w1 is large (close to 1), a response from X1 has a positive effect on the response of Y. With a w1 close to 0, X1 has little effect (it is neutral). And if w1 is strongly negative (close to -1), a response from X1 has an inhibiting effect on Y. In that last case, Y would still be able to respond if other cells (such as X2 in the illustration) had a positive influence.
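The threshold rule described above fits in a few lines. This sketch models the two-input network from the figure with illustrative values (not taken from the figure): w1 = -0.3, so X1 inhibits Y somewhat; w2 = 0.9, so X2 excites Y; and a threshold of 0.5. It shows that Y still responds when both X1 and X2 are active, because the positive influence of X2 outweighs the inhibition from X1.

```python
def neuron(inputs, weights, threshold):
    """Respond (return 1) if the weighted sum of the inputs exceeds the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total > threshold else 0


w = [-0.3, 0.9]  # illustrative weights w1 (X1 -> Y) and w2 (X2 -> Y)
for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2, "->", neuron([x1, x2], w, threshold=0.5))
# 0 0 -> 0
# 0 1 -> 1
# 1 0 -> 0
# 1 1 -> 1
```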

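During training, a process called backpropagation adjusts the connection weights: the difference between the network's output and the desired output is passed backwards through the network, and each weight is nudged in the direction that reduces this error. Ultimately, the neural network learns the correct output pattern for a given input pattern.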
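A minimal sketch of backpropagation, assuming a tiny network with one hidden layer, squared-error loss and plain gradient descent (all illustrative choices). Backpropagation needs a smooth activation function, so the hard threshold from the previous paragraphs is replaced here by a sigmoid. The network learns XOR ('exactly one input is on'), a classic pattern that a single output cell cannot represent, so the hidden layer is needed.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_xor(hidden=4, epochs=5000, lr=0.5, seed=1):
    """Train a 2-input, `hidden`-unit, 1-output network on XOR with backpropagation."""
    rng = random.Random(seed)
    data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
    # Each hidden unit has weights for x1, x2 and a bias; the output unit has
    # one weight per hidden unit plus a bias (the last entry).
    w_h = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(hidden)]
    w_o = [rng.uniform(-1, 1) for _ in range(hidden + 1)]

    def forward(x):
        h = [sigmoid(w[0] * x[0] + w[1] * x[1] + w[2]) for w in w_h]
        y = sigmoid(sum(w_o[j] * h[j] for j in range(hidden)) + w_o[-1])
        return h, y

    losses = []
    for _ in range(epochs):
        epoch_loss = 0.0
        for x, target in data:
            h, y = forward(x)
            epoch_loss += 0.5 * (y - target) ** 2
            # Backward pass: error signal at the output unit...
            delta_o = (y - target) * y * (1 - y)
            # ...propagated back to each hidden unit through its outgoing weight.
            delta_h = [delta_o * w_o[j] * h[j] * (1 - h[j]) for j in range(hidden)]
            # Gradient-descent step on every weight.
            for j in range(hidden):
                w_o[j] -= lr * delta_o * h[j]
                w_h[j][0] -= lr * delta_h[j] * x[0]
                w_h[j][1] -= lr * delta_h[j] * x[1]
                w_h[j][2] -= lr * delta_h[j]
            w_o[-1] -= lr * delta_o
        losses.append(epoch_loss)
    return losses, forward


losses, forward = train_xor()
print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, round(forward(x)[1], 2))
```

Depending on the random starting weights, XOR training can occasionally settle in a poor local minimum; the loss should still fall, but not every output will then end up close to 0 or 1.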

Neural networks are (so far) the most successful technique for acquiring 'non-symbolic knowledge'. This refers to knowledge that cannot be directly expressed in logical terms: the kind of knowledge about which human experts say 'I have a feeling that it is like this and like that'. For example, a chess or Go player may say: 'This move doesn't feel right'. This means that this person, with all their experience, has a pattern somewhere that indicates that it might be a bad move, without having an immediate argument, evidence or calculation to substantiate it. This kind of knowledge is what neural networks excel at.

Deep learning

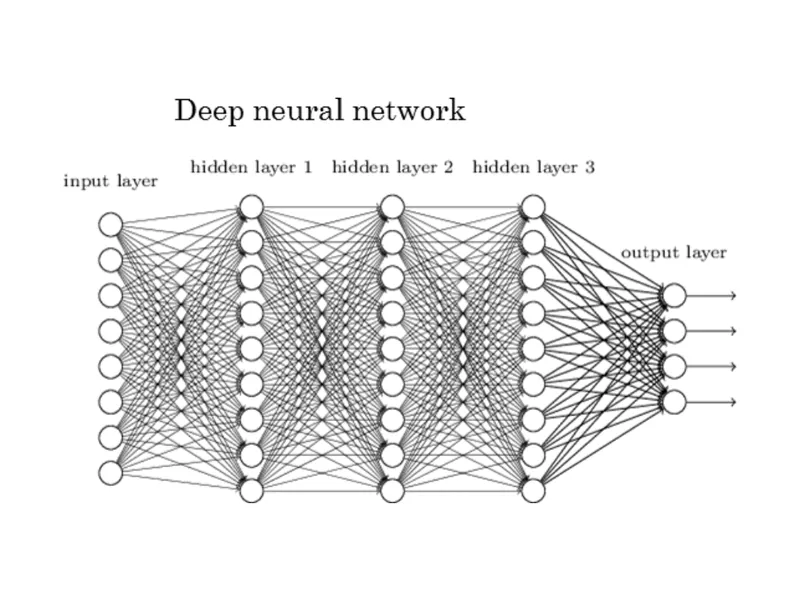

Een diep neuraal netwerk onderscheidt zich doordat het extra veel lagen en dus neuronen in het netwerk heeft. Het volgende plaatje geeft een diep neuraal netwerk weer.

Because of the large number of layers, the learning strategy must be carefully thought through, and the layers are sometimes even trained separately. Certain types of deep neural networks (convolutional neural networks) are very good at image recognition. The program that defeated the Go masters, AlphaGo, also uses deep neural networks, among other methods.

Deep recurrent neural network

In a 'deep recurrent neural network', the neurons are not arranged strictly in layers that pass signals forward from input to output; some connections loop back, so the neurons form a graph with cycles. The advantage of such a network is that it has a memory: it can retain something about earlier inputs when processing the next input.
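The memory effect can be shown with a single recurrent neuron: its output (hidden state) is fed back in at the next time step. This is a minimal sketch with hypothetical, hand-picked weights `w_x` and `w_h`; real recurrent networks use vectors of neurons and learn their weights (typically with backpropagation through time).

```python
import math


def rnn_run(inputs, w_x=0.8, w_h=0.5, bias=0.0):
    """Run one recurrent neuron over a sequence and return its hidden states.

    The previous hidden state h is fed back into the neuron, so each new state
    depends on the current input *and* on everything seen before.
    """
    h = 0.0
    states = []
    for x in inputs:
        h = math.tanh(w_x * x + w_h * h + bias)
        states.append(h)
    return states


# Same final input (1.0), different history -> different final state.
quiet = rnn_run([0.0, 0.0, 1.0])[-1]
busy = rnn_run([1.0, 1.0, 1.0])[-1]
print(busy > quiet)  # True: the network "remembers" the earlier inputs
```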

Search and optimisation

Many AI problems can be translated directly into finding solutions in a large search space. That is why search and optimisation within search spaces are an important part of AI. The search functions can be quite simple, such as a function that looks at all neighbouring points (solutions) of a point in the search space and moves to a neighbour if it is a better solution. This is called hill climbing. Hill climbing is a simple local search algorithm that very quickly gets stuck on a local maximum in the search space. Other search algorithms look less locally and get stuck less easily; examples are simulated annealing and evolutionary algorithms.
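A minimal sketch of hill climbing on a made-up search space: the integers 0 to 10, each with a 'height' (solution quality). The landscape has a local peak at 2 and the global peak at 8, so the result depends entirely on where the search starts.

```python
def hill_climb(f, x, lo, hi):
    """Move to the best neighbour (x - 1 or x + 1) until no neighbour is better."""
    while True:
        neighbours = [n for n in (x - 1, x + 1) if lo <= n <= hi]
        best = max(neighbours, key=f)
        if f(best) <= f(x):
            return x  # no neighbour improves: a (possibly only local) maximum
        x = best


# Made-up landscape: local maximum at x = 2, global maximum at x = 8.
HEIGHTS = [1, 3, 5, 4, 2, 3, 6, 8, 9, 7, 4]


def height(x):
    return HEIGHTS[x]


print(hill_climb(height, 0, 0, 10))  # 2 (stuck on the local maximum)
print(hill_climb(height, 5, 0, 10))  # 8 (reaches the global maximum)
```

Simulated annealing would sometimes accept a worse neighbour, with a probability that shrinks over time, which lets it escape from the hill at 2.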

Evolutionary algorithms

Evolutionary algorithms are also methods for searching in a large search space. They imitate, on a small scale, the evolutionary process described by Darwin in order to find a good solution. Each candidate solution is encoded in a digital structure, just as the structure of many biological beings is encoded in their DNA.

We start with a set of individuals (collections of genes) and test each individual on the task to be learned; this determines how fit it is. Techniques such as selection, crossover (recombination) and mutation are then used to create a new set of individuals, whose fitness is measured in turn.

Such an encoded individual can be seen as a point in the search space (of all possible genomes) in which we look for a better solution to a certain task. A task can be very small, like playing tic-tac-toe better, or very large, like surviving in a complex system with other organisms and ever-changing conditions.

It is important to keep a good balance between variation (the introduction of new mutations) and selection (the individuals must become better at the task).
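A minimal sketch of a genetic algorithm, one kind of evolutionary algorithm, on the standard toy task 'OneMax': each genome is a string of bits and its fitness is simply the number of 1s. Population size, mutation rate and the use of tournament selection, one-point crossover and elitism are illustrative choices. Mutation supplies variation, while tournament selection and elitism push the population towards better individuals.

```python
import random


def evolve(n_bits=20, pop_size=30, generations=60, mutation_rate=0.02, seed=0):
    """Minimal genetic algorithm on OneMax (maximise the number of 1 bits)."""
    rng = random.Random(seed)
    fitness = sum  # fitness of a bit-list genome = number of 1 bits

    def tournament(pop):
        # Selection: the fitter of two randomly chosen individuals.
        a, b = rng.sample(pop, 2)
        return a if fitness(a) >= fitness(b) else b

    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    best_start = max(map(fitness, pop))
    for _ in range(generations):
        children = [max(pop, key=fitness)]  # elitism: keep the best unchanged
        while len(children) < pop_size:
            p1, p2 = tournament(pop), tournament(pop)
            cut = rng.randrange(1, n_bits)  # one-point crossover
            child = p1[:cut] + p2[cut:]
            # Mutation: flip each bit with a small probability (variation).
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            children.append(child)
        pop = children
    return best_start, max(map(fitness, pop))


start, end = evolve()
print(start, "->", end)  # best fitness in the first vs. the last generation
```

Raising `mutation_rate` adds variation but can destroy good solutions; lowering it makes the search converge faster but risks getting stuck, which is the balance described above.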

Logic

There are many kinds of logic. In the various classical AI systems, one or more of these logic forms are used. Possible forms of logic include:

- Propositional logic

- Predicate logic

- Temporal logic

- Modal logic

These are common logic forms in classical AI systems, in which a statement is (at a given moment) either true or false. Besides these classical logics, there is also fuzzy logic, which studies how we can reason with vague notions (shades of grey) rather than strict notions (black and white).
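A minimal sketch of fuzzy logic: instead of true or false, a statement gets a degree of truth between 0 and 1. The membership functions for 'warm' and 'humid' below are made-up linear ramps; the AND, OR and NOT operators are the standard Zadeh operators (minimum, maximum and complement).

```python
def warm(temp_c):
    """Degree (0..1) to which a temperature counts as 'warm' (made-up ramp 15-25 °C)."""
    if temp_c <= 15:
        return 0.0
    if temp_c >= 25:
        return 1.0
    return (temp_c - 15) / 10


def humid(rel_humidity):
    """Degree to which relative humidity (%) counts as 'humid' (made-up ramp 40-80 %)."""
    return min(max((rel_humidity - 40) / 40, 0.0), 1.0)


# Zadeh fuzzy operators: AND = min, OR = max, NOT = 1 - x.
def f_and(a, b):
    return min(a, b)


def f_or(a, b):
    return max(a, b)


def f_not(a):
    return 1 - a


t, h = warm(21), humid(70)  # 0.6 and 0.75
print(f_and(t, h))  # 0.6  -> "warm AND humid" is true to degree 0.6
print(f_or(t, h))   # 0.75 -> "warm OR humid" is true to degree 0.75
```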

Methods based on probability

In the AI world (as in real life), there are many situations where knowledge is incomplete, or where it is uncertain whether the knowledge is really true. Probability-based methods try to provide tools for dealing with these uncertainties, and many different methods fall under this banner.
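Bayes' rule is the basic tool behind many probability-based methods: it updates the probability of a hypothesis when new evidence comes in. The worked example below uses made-up numbers for a spam filter: 20% of all mail is spam, and the word 'prize' appears in 40% of spam messages but in only 1% of legitimate ones.

```python
def posterior(prior, p_evidence_given_h, p_evidence_given_not_h):
    """Bayes' rule: P(H | E) = P(E | H) * P(H) / P(E)."""
    p_evidence = (p_evidence_given_h * prior
                  + p_evidence_given_not_h * (1 - prior))
    return p_evidence_given_h * prior / p_evidence


# P(spam | message contains "prize"), with the made-up numbers above.
p = posterior(prior=0.2, p_evidence_given_h=0.4, p_evidence_given_not_h=0.01)
print(round(p, 3))  # 0.909
```

Seeing the word raises the probability of spam from 20% to about 91%, because 0.4 × 0.2 = 0.08 of all mail is spam containing 'prize', against only 0.01 × 0.8 = 0.008 that is legitimate mail containing it.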

Practical applications

AI is now used in many products and services. Some important examples:

- Self-driving vehicles (self-driving cars, drones)

- Medical diagnosis

- Proofs of mathematical theorems

- Search engines

- Virtual assistants, such as Siri and Cortana

- Spam filtering

- Reaching the right target groups with online marketing

AI as a Service

More and more large companies now offer services based on AI. For example, Microsoft offers various Cognitive Services that can detect the faces in a photo and estimate people's emotions, gender, and age. Developers can use these services to work with that data in their own applications.

Google, for its part, offers the Cloud Vision and Cloud Natural Language APIs.

Potential danger of AI

In recent years, several AI researchers, as well as well-known visionaries such as Bill Gates, Stephen Hawking, and Elon Musk, have expressed their concern in the media about the possible emergence of superintelligence. This phenomenon is also known as the technological singularity.

A common hypothesis states that if an AI application reaches the same level of intelligence as a human being, it will stay at that level for only a very short time. The idea is that such an application will quickly find ways to raise its own intelligence, much faster than humans can learn. In short: an intelligence explosion resulting in superintelligence.

Our brains are shaped by millions of years of evolution and are very difficult to adapt or expand. That's not the case for an AI application. It will certainly be possible for a strong AI to incorporate the weak AI techniques that already exist. Scaling up brainpower across multiple parallel computer systems should be relatively effortless. And with data storage and search algorithms, an ironclad memory of many petabytes can soon be achieved. In addition, such an AI application does not need to eat or sleep, and can therefore devote all its brainpower, in parallel, to further designing and improving itself or its successors.

This tremendously high intelligence could enable the AI application to create super-smart weapon systems and thereby acquire unbeatable power. For now, strong AI still seems quite far away, but let's hope that when the era of strong AI arrives, it will be a friendly variant that we have to deal with.
